Arista's Ethernet Bet

Q4 FY2025 earnings scored against the bull and bear cases

Arista Networks just printed its first billion-dollar quarter. AI networking revenue doubled to $1.5B in FY2025, and management guided $3.25B for 2026, which would more than double it again. The stock trades at ~40x forward earnings.

Is that justified? Or is Arista priced for perfect execution in a market that could stumble?

The answer depends on six specific debates: Ethernet vs. InfiniBand, white-box competition, customer concentration, gross margins, supply chains, and valuation.

I scored each one against Q4 earnings evidence. But first, you need to understand why the Ethernet TAM is expanding faster than most investors realize.

Ethernet’s Opening Is Wider Than You Think

Nvidia has historically dominated AI cluster networking with a vertically integrated stack: NVLink for scale-up, InfiniBand for scale-out (courtesy of the famous Mellanox acquisition), and CUDA libraries like NCCL to make it all programmable. Nvidia’s networking segment alone generated $8.2B in Q3 FY2026, up 162% year-over-year!

But two shifts underway are expanding the TAM in Ethernet’s direction. (Read GPU Networking Basics Part 3 if you need a refresher on scale-up vs. scale-out.)

First, Ethernet is making inroads in scale-out. InfiniBand was purpose-built for ultra-low-latency distributed computing, but Ethernet has been closing the gap through RoCE (RDMA over Converged Ethernet) and a new generation of lossless Ethernet fabrics designed for AI. Nvidia itself launched Spectrum-X, its own Ethernet-for-AI platform, and Meta, Microsoft, Oracle, and xAI are all building on it. But Spectrum-X isn’t the only option: hyperscalers are also choosing vendor-neutral Ethernet fabrics, and the Ultra Ethernet Consortium is working to standardize Ethernet for AI workloads.

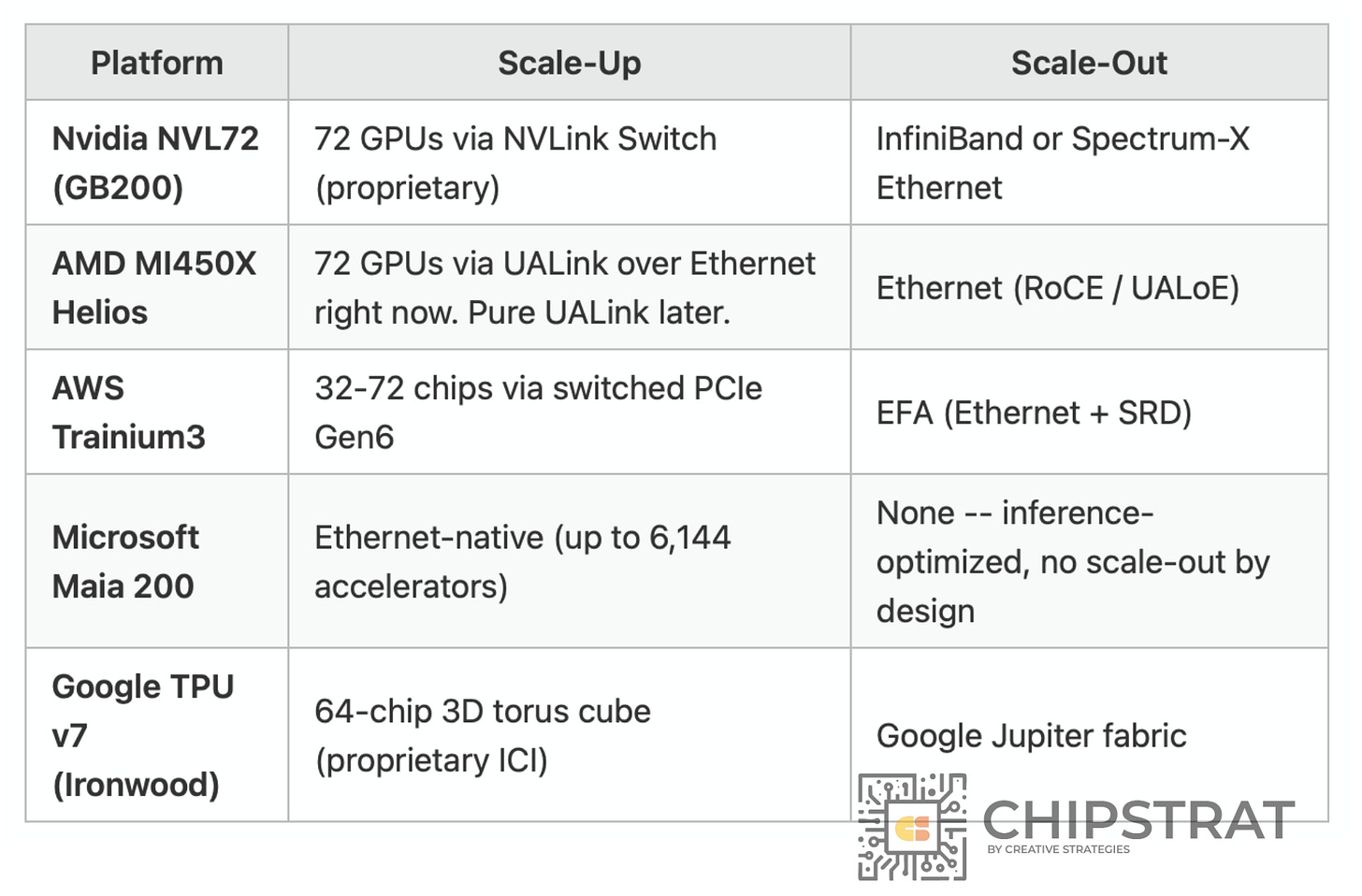

Second, scale-up is no longer Nvidia-only. Scale-up used to mean NVLink. Only game in town. But as I covered in GPU Networking Part 4: Year End Wrap, every major XPU vendor now has its own scale-up architecture, and several are building on Ethernet:

AMD Helios uses Ethernet for both scale-up and scale-out.

Microsoft Maia 200 is Ethernet-native for scale-up.

AWS Trainium3 uses Ethernet for scale-out.

Even Nvidia now offers Spectrum-X Ethernet alongside InfiniBand.

As accelerator vendors build on Ethernet and hyperscalers standardize on it, more of the AI cluster fabric shifts into domains served by Ethernet switch vendors.

Take Microsoft’s Maia 200. As Saurabh Dighe explained in our interview: “We have not invested in a scale-out network. We have invested in an inference-driven chip. We have invested in taking a scale-up approach more than a scale-out approach. That’s brought our cost down.” Microsoft deliberately chose Ethernet-native scale-up and skipped proprietary scale-out entirely, optimizing for inference cost per token. That design choice routes directly through standard Ethernet switches. Pun intended.

Agentic AI Adds Another Layer of Demand

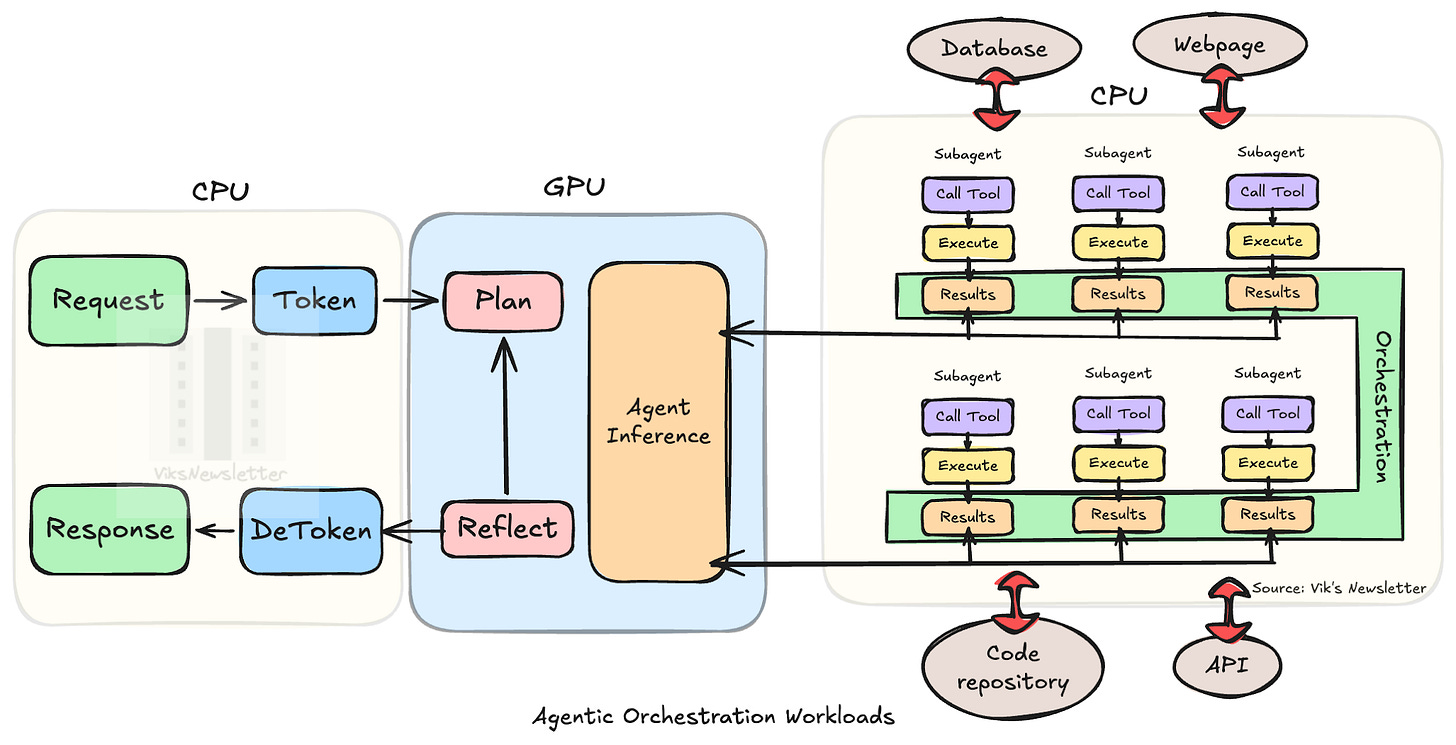

GPUs don’t operate in isolation. Every GPU needs a host CPU for orchestration, data loading, and network management. Inside a rack, CPUs and GPUs are increasingly tightly coupled; Nvidia’s GB200/GB300 systems integrate them via NVLink-C2C into a single coherent unit. But agentic AI adds another layer of Ethernet demand on top of that.

In agentic workflows, a CPU-heavy orchestration tier sits outside the GPU racks, running agent loops, tool calls, and sub-agent coordination, and dispatching inference requests to GPU pools over the network. Vik Sekar’s piece on the CPU bottleneck in agentic AI illustrates this.

That’s a new class of traffic between CPU server racks and GPU clusters, all flowing over Ethernet. Add in scale-across opportunities (connecting clusters to clusters), and the networking TAM keeps expanding.

So Where Does Arista Stand?

Ethernet is gaining share in scale-out, making inroads in scale-up, and now serving a new orchestration tier driven by agentic AI.

AI clusters need more ports per rack, faster speeds (400G → 800G → 1.6T), and less oversubscription than traditional cloud networks, meaning more switches per cluster at higher ASPs.
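To see why less oversubscription means more switches, here is a back-of-the-envelope sketch in Python. It assumes a simple two-tier leaf-spine fabric where every port runs at the same speed. The function name, the 64-port radix, and the 1,024-endpoint cluster are illustrative assumptions of mine, not Arista figures.

```python
import math


def two_tier_switch_count(endpoints: int, radix: int, oversubscription: float) -> dict:
    """Count switches in a simplified two-tier leaf-spine fabric (hypothetical model).

    oversubscription is downlink ports divided by uplink ports on each leaf;
    1.0 means non-blocking. All ports are assumed to run at the same speed.
    """
    # Split each leaf's ports between uplinks (to spines) and downlinks (to endpoints).
    uplinks_per_leaf = math.ceil(radix / (1 + oversubscription))
    downlinks_per_leaf = radix - uplinks_per_leaf
    leaves = math.ceil(endpoints / downlinks_per_leaf)
    # Every leaf uplink has to land on a spine port.
    spines = math.ceil(leaves * uplinks_per_leaf / radix)
    return {"leaves": leaves, "spines": spines, "total": leaves + spines}


# Illustrative inputs only: 1,024 endpoints on 64-port switches.
oversubscribed = two_tier_switch_count(1024, 64, 3.0)  # 3:1 oversubscribed design
non_blocking = two_tier_switch_count(1024, 64, 1.0)    # 1:1 non-blocking design
print(oversubscribed)
print(non_blocking)
```

Under these assumptions, the same 1,024 endpoints need 28 switches at 3:1 oversubscription and 48 switches at 1:1. Real fabrics add extra tiers, multiple rails, and mixed port speeds, so treat this as directional only.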

And Arista shipped 150 million Ethernet ports in FY25, grew revenue from $5.9B to $9.0B in two years, and just guided for $11.25B in 2026.
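For readers who want to check the growth math, here is a small Python sketch using only the figures quoted in this piece. One assumption to flag: it treats AI networking revenue as a subset of total revenue, which is how I read the guidance but is not spelled out above. The variable and function names are mine.

```python
def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate between two values."""
    return (end / start) ** (1 / years) - 1


# Figures quoted in the article, in billions of dollars.
revenue_two_years_ago = 5.9
revenue_fy25 = 9.0
revenue_2026_guide = 11.25
ai_fy25 = 1.5
ai_2026_guide = 3.25

trailing_cagr = cagr(revenue_two_years_ago, revenue_fy25, 2)
guided_growth = revenue_2026_guide / revenue_fy25 - 1

# Assumption: AI networking revenue is counted inside total revenue.
ai_share_fy25 = ai_fy25 / revenue_fy25
ai_share_2026 = ai_2026_guide / revenue_2026_guide

print(f"Trailing two-year CAGR: {trailing_cagr:.1%}")
print(f"Growth implied by 2026 guide: {guided_growth:.1%}")
print(f"AI share of revenue, FY25: {ai_share_fy25:.1%}")
print(f"AI share of revenue, 2026 guide: {ai_share_2026:.1%}")
```

On these numbers, the 2026 guide implies roughly 25% growth, slightly above the roughly 23.5% annualized pace of the past two years, while the AI share of revenue would rise from about 17% to about 29%.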

But… Arista doesn’t design its own chips. It outsources manufacturing. Its two largest customers account for 42% of revenue. And its CEO just called memory costs “horrendous”.

So is the stock a compounding machine riding a structural Ethernet tailwind? Or is it priced for perfection in a business with real concentration risk, margin pressure, and a supply chain that can’t keep up with demand?

I identified six specific debates that drive Arista’s valuation and scored each one against Q4 FY2025 earnings evidence. Here’s what’s behind the paywall:

What Arista actually sells: the business units, the EOS software moat, and why hyperscalers keep buying blue-box switches when white-box is cheaper

The Six Debates: bull vs. bear on Ethernet vs. InfiniBand, white-box competition, customer concentration, gross margins, the “golden screw” supply chain, and whether ~40x P/E is justified

Earnings Validation Scorecard: each debate scored against Q4 results, with key management quotes